How To Implement AWS SSB Controls in Terraform - Part 3

Learn how to implement the AWS SSB workload controls using Terraform, starting with those related to access and data protection.

Introduction

The AWS Startup Security Baseline (SSB) defines a set of controls that comprise a lean but solid foundation for the security posture of your AWS accounts. In part 1 and part 2 of our blog series, we examined how to implement account controls using Terraform. In this installment, we will look at the workload controls that focus on access to your workload infrastructure and protection of your data in AWS. Let's start with WKLD.01, which is about using IAM roles to provide AWS resources with access to other AWS services.

WKLD.01 – Use IAM Roles for Permissions

The workload control WKLD.01 requires using IAM roles with all supported compute environments to grant them appropriate permissions to access other AWS services and resources.

Temporary or short-term credentials obtained via IAM roles and identity federation are significantly more secure than long-term credentials such as IAM users and their access keys, which could cause serious harm if compromised. For AWS compute services, the compute resource (for example, an EC2 instance or a Lambda function) assumes an IAM role through AWS Security Token Service (AWS STS), which issues temporary credentials scoped to the role's permissions. The following table lists common AWS compute services and the feature each one uses to support IAM role assumption:

| Service | Feature | Terraform resource and argument |
| --- | --- | --- |
| Amazon EC2 | Instance profile | aws_iam_instance_profile resource and iam_instance_profile in aws_instance |
| Amazon ECS | Task IAM role | task_role_arn in aws_ecs_task_definition |
| Amazon EKS | IAM roles for service accounts (IRSA) | iam-role-for-service-accounts-eks module |
| Amazon EKS | EKS Pod Identities | aws_eks_pod_identity_association resource |
| AWS App Runner | Instance role | instance_role_arn in the instance_configuration block of aws_apprunner_service |
| AWS Lambda | Lambda execution role | role in aws_lambda_function |

In the ACCT.04 section in part 1 of the blog series, we already looked at an example that assigns a Lambda function an execution role that allows it to fetch CloudWatch metrics and send emails via SES. The setup is very similar for ECS tasks and App Runner services, so for brevity we won't provide more examples.

For EC2 instances, there is an additional step: you create an instance profile associated with the target IAM role and attach it to the EC2 instance. Here is an example that enables AWS Systems Manager (SSM) for an EC2 instance:

```hcl
# Look up the latest Ubuntu 22.04 LTS AMI published by Canonical
data "aws_ami" "ubuntu" {
  most_recent = true

  filter {
    name   = "name"
    values = ["ubuntu/images/hvm-ssd/ubuntu-jammy-22.04-amd64-server-*"]
  }

  filter {
    name   = "virtualization-type"
    values = ["hvm"]
  }

  owners = ["099720109477"] # Canonical
}

data "aws_iam_policy" "ssm_managed_instance_core" {
  name = "AmazonSSMManagedInstanceCore"
}

# IAM role that EC2 instances are allowed to assume
resource "aws_iam_role" "ssm" {
  name = "SSMDomainJoinRoleForEC2"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Action = "sts:AssumeRole"
        Effect = "Allow"
        Principal = {
          Service = "ec2.amazonaws.com"
        }
      }
    ]
  })
}

# Attach the AWS-managed policy required by the SSM Agent
# (replaces the deprecated managed_policy_arns argument of aws_iam_role)
resource "aws_iam_role_policy_attachment" "ssm_managed_instance_core" {
  role       = aws_iam_role.ssm.name
  policy_arn = data.aws_iam_policy.ssm_managed_instance_core.arn
}

# The instance profile passes the role to the EC2 instance
resource "aws_iam_instance_profile" "ssm" {
  name = aws_iam_role.ssm.name
  role = aws_iam_role.ssm.name
}

# Assumes data.aws_subnet.private is defined elsewhere
resource "aws_instance" "web" {
  ami                  = data.aws_ami.ubuntu.id
  iam_instance_profile = aws_iam_instance_profile.ssm.name
  instance_type        = "t3.micro"
  subnet_id            = data.aws_subnet.private.id
}
```

For EKS with IRSA, the iam-role-for-service-accounts-eks submodule of the terraform-aws-iam module can be useful, especially if you use the terraform-aws-eks module to manage your EKS resources. Refer to the module documentation for details.
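If you would rather not pull in the module, the IRSA trust relationship can also be written by hand. The following is a minimal sketch, assuming an existing cluster named my-cluster that already has an IAM OIDC provider registered, and a hypothetical service account my-app-sa in the my-app namespace; all of these names are placeholders.

```hcl
# Hypothetical names: cluster "my-cluster", namespace "my-app", service account "my-app-sa"
data "aws_eks_cluster" "this" {
  name = "my-cluster"
}

# Assumes the cluster's IAM OIDC provider has already been created
data "aws_iam_openid_connect_provider" "eks" {
  url = data.aws_eks_cluster.this.identity[0].oidc[0].issuer
}

locals {
  # The condition keys use the issuer URL without the https:// scheme
  oidc_issuer = replace(data.aws_eks_cluster.this.identity[0].oidc[0].issuer, "https://", "")
}

# Trust policy: only the named service account can assume the role via web identity
data "aws_iam_policy_document" "irsa_trust" {
  statement {
    actions = ["sts:AssumeRoleWithWebIdentity"]

    principals {
      type        = "Federated"
      identifiers = [data.aws_iam_openid_connect_provider.eks.arn]
    }

    condition {
      test     = "StringEquals"
      variable = "${local.oidc_issuer}:sub"
      values   = ["system:serviceaccount:my-app:my-app-sa"]
    }

    condition {
      test     = "StringEquals"
      variable = "${local.oidc_issuer}:aud"
      values   = ["sts.amazonaws.com"]
    }
  }
}

resource "aws_iam_role" "my_app_irsa" {
  name               = "my-app-irsa"
  assume_role_policy = data.aws_iam_policy_document.irsa_trust.json
}
```

The Kubernetes service account then needs the eks.amazonaws.com/role-arn annotation set to this role's ARN so that pods using it receive credentials for the role.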

For EKS with Pod Identities, the blog post AWS EKS: From IRSA to Pod Identity With Terraform by Marco Sciatta provides a decent walkthrough on how to configure it in Terraform.
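For orientation, a Pod Identity setup consists of three pieces: the EKS Pod Identity Agent add-on, an IAM role that trusts the pods.eks.amazonaws.com service principal, and the association itself. Here is a minimal sketch, again assuming a hypothetical cluster my-cluster and service account my-app-sa in the my-app namespace.

```hcl
# Hypothetical names: cluster "my-cluster", namespace "my-app", service account "my-app-sa"

# The EKS Pod Identity Agent add-on must be running in the cluster
resource "aws_eks_addon" "pod_identity_agent" {
  cluster_name = "my-cluster"
  addon_name   = "eks-pod-identity-agent"
}

# Pod Identity roles trust the EKS Pod Identity service principal
data "aws_iam_policy_document" "pod_identity_trust" {
  statement {
    actions = ["sts:AssumeRole", "sts:TagSession"]

    principals {
      type        = "Service"
      identifiers = ["pods.eks.amazonaws.com"]
    }
  }
}

resource "aws_iam_role" "my_app" {
  name               = "my-app-pod-identity"
  assume_role_policy = data.aws_iam_policy_document.pod_identity_trust.json
}

# Bind the role to the Kubernetes service account
resource "aws_eks_pod_identity_association" "my_app" {
  cluster_name    = "my-cluster"
  namespace       = "my-app"
  service_account = "my-app-sa"
  role_arn        = aws_iam_role.my_app.arn
}
```

Unlike IRSA, the trust policy does not reference a cluster-specific OIDC provider, so the same role can be associated with service accounts in multiple clusters.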

Don't forget to apply least privilege permissions as a best practice!

WKLD.02 – Use Resource-Based Policies

The workload control WKLD.02 recommends using resource-based policies to provide additional access control at the resource level.

When both types of policies apply to a request within the same AWS account, the permission evaluation logic generally takes the union of the allow permissions in the principal's identity-based policies and in the resource-based policy of the resource being accessed to determine the effective access level. For cross-account access, both the identity-based policy and the resource-based policy must allow the request. As usual, an explicit deny in either policy takes precedence and prevents access.
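As an example of an explicit deny overriding any allow, the following sketch attaches a bucket policy that denies all S3 actions on a bucket when the request is not sent over TLS, using the aws:SecureTransport global condition key. The bucket name is a placeholder, and since a bucket has only one bucket policy, in practice you would merge this statement with your other statements.

```hcl
# Placeholder bucket for illustration; bucket names must be globally unique
resource "aws_s3_bucket" "example" {
  bucket = "example-bucket-name"
}

data "aws_iam_policy_document" "deny_insecure_transport" {
  statement {
    sid     = "DenyInsecureTransport"
    effect  = "Deny"
    actions = ["s3:*"]

    principals {
      type        = "*"
      identifiers = ["*"]
    }

    resources = [
      aws_s3_bucket.example.arn,
      "${aws_s3_bucket.example.arn}/*",
    ]

    # Matches any request made over plain HTTP
    condition {
      test     = "Bool"
      variable = "aws:SecureTransport"
      values   = ["false"]
    }
  }
}

resource "aws_s3_bucket_policy" "example" {
  bucket = aws_s3_bucket.example.id
  policy = data.aws_iam_policy_document.deny_insecure_transport.json
}
```

No identity-based allow can override this statement, because explicit denies are evaluated first.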

Resource-based policies are especially effective when combined with policy conditions with global condition context keys such as aws:PrincipalOrgID, which specifies the organization ID of the principal.
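For example, the following sketch grants read access to a bucket's objects only to principals that belong to your AWS organization. The organization ID o-exampleorgid and the bucket name are placeholders.

```hcl
# Placeholder bucket for illustration
resource "aws_s3_bucket" "shared" {
  bucket = "example-org-shared-bucket"
}

data "aws_iam_policy_document" "org_only_read" {
  statement {
    sid       = "AllowOrgPrincipalsToRead"
    actions   = ["s3:GetObject"]
    resources = ["${aws_s3_bucket.shared.arn}/*"]

    # Any principal is allowed, but only if it belongs to the organization
    principals {
      type        = "AWS"
      identifiers = ["*"]
    }

    condition {
      test     = "StringEquals"
      variable = "aws:PrincipalOrgID"
      values   = ["o-exampleorgid"] # Placeholder organization ID
    }
  }
}

resource "aws_s3_bucket_policy" "shared" {
  bucket = aws_s3_bucket.shared.id
  policy = data.aws_iam_policy_document.org_only_read.json
}
```

Principals in other accounts of the organization still need an identity-based policy that allows s3:GetObject, since cross-account access requires both sides to allow the request.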

Many AWS services support resource-based policies. Here are a few common examples:

| Service | Feature | Terraform resource |
| --- | --- | --- |
| Amazon S3 | Bucket policy | aws_s3_bucket_policy |
| Amazon SQS | Queue policy | aws_sqs_queue_policy |
| AWS KMS | Key policy | aws_kms_key_policy |

For the full list of AWS services that support resource-based policies, refer to the table in AWS services that work with IAM.

To define resource-based policies in Terraform, let's look at the following example that provisions an S3 bucket that uses a KMS customer-managed key (CMK) with both an S3 bucket policy and a KMS key policy:

```hcl
data "aws_caller_identity" "this" {}

locals {
  account_id = data.aws_caller_identity.this.account_id
}

# Role that principals in the same account can assume
resource "aws_iam_role" "top_secret_reader" {
  name = "TopSecretReaderRole"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Action = "sts:AssumeRole"
        Effect = "Allow"
        Principal = {
          AWS = "arn:aws:iam::${local.account_id}:root"
        }
      }
    ]
  })
}

# Minimal identity-based policy; bucket and key access come from resource-based policies
# (Version belongs at the top level of the policy, not inside a statement)
resource "aws_iam_role_policy" "top_secret_reader_list_buckets" {
  name = "ListAllMyBuckets"
  role = aws_iam_role.top_secret_reader.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Action   = "s3:ListAllMyBuckets"
        Effect   = "Allow"
        Resource = "*"
      }
    ]
  })
}

resource "aws_kms_key" "this" {
  description = "KMS key for S3 SSE"
}

resource "aws_kms_alias" "this" {
  name          = "alias/s3_sse_key"
  target_key_id = aws_kms_key.this.key_id
}

resource "aws_kms_key_policy" "this" {
  key_id = aws_kms_key.this.id
  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        # Lets IAM policies in this account grant access to the key
        Sid    = "Enable IAM user and AWS service permissions"
        Action = "kms:*"
        Effect = "Allow"
        Principal = {
          AWS = "arn:aws:iam::${local.account_id}:root"
        }
        Resource = "*"
      },
      {
        Sid = "Allow use of the key"
        Action = [
          "kms:Encrypt",
          "kms:Decrypt",
          "kms:ReEncrypt*",
          "kms:GenerateDataKey*",
          "kms:DescribeKey"
        ]
        Effect = "Allow"
        Principal = {
          # Referencing the role ensures it exists before KMS validates the principal
          AWS = aws_iam_role.top_secret_reader.arn
        }
        Resource = "*"
      }
    ]
  })
}

resource "aws_s3_bucket" "this" {
  bucket = "top-secret-bucket-${local.account_id}"
}

# Encrypt new objects with the CMK by default
resource "aws_s3_bucket_server_side_encryption_configuration" "this" {
  bucket = aws_s3_bucket.this.id

  rule {
    apply_server_side_encryption_by_default {
      kms_master_key_id = aws_kms_key.this.key_id
      sse_algorithm     = "aws:kms"
    }
  }
}

resource "aws_s3_bucket_policy" "this" {
  bucket = aws_s3_bucket.this.id
  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Sid = "Allow listing of the bucket"
        Action = [
          "s3:ListBucket",
          "s3:GetBucketLocation"
        ]
        Effect = "Allow"
        Principal = {
          AWS = aws_iam_role.top_secret_reader.arn
        }
        Resource = aws_s3_bucket.this.arn
      },
      {
        Sid = "Allow read of objects in the bucket"
        Action = [
          "s3:GetObject",
          "s3:GetObjectVersion"
        ]
        Effect = "Allow"
        Principal = {
          AWS = aws_iam_role.top_secret_reader.arn
        }
        Resource = "${aws_s3_bucket.this.arn}/*"
      }
    ]
  })
}
```

The role TopSecretReaderRole is granted only minimal permissions via an identity-based policy, so that access to the bucket and the key is controlled by the resource-based policies. The KMS key policy allows the role to use the key for decryption, while the S3 bucket policy grants read-only access to the bucket and its objects, which are encrypted with the same KMS key. To test the access, first upload a file to the S3 bucket with an IAM user or role that has access, then assume TopSecretReaderRole and verify that you can download the file from the S3 bucket.

Once again, ensure that you practice the least privilege principle when defining your resource-based policies.

WKLD.03 – Use Ephemeral Secrets or a Secrets-Management Service

The workload control WKLD.03 recommends using either ephemeral secrets or a secrets-management service for applications in AWS.

There are two main AWS services that support secret management:

Secrets Manager is a comprehensive secrets management service that provides features such as secrets rotation, monitoring, and auditing for compliance. Many AWS services integrate with Secrets Manager to store and retrieve credentials and sensitive data.

Meanwhile, AWS Systems Manager Parameter Store offers a simple option for storing secrets as SecureString parameters alongside other related parameters. While it lacks features such as secrets rotation and has a smaller size limit, standard parameters are free to use, which makes Parameter Store a great option for storing application and service settings.

Personally, I don't find it natural to manage secrets in Terraform and generally avoid doing so. Instead, I create secrets outside Terraform and use the aws_secretsmanager_secret and aws_secretsmanager_secret_version data sources to retrieve them for use in resource arguments. For example:

```hcl
data "aws_secretsmanager_secret" "fsx_init_admin_pwd" {
  name = "aws/fsx/my-ontap-fs/initial-admin-password"
}

data "aws_secretsmanager_secret_version" "fsx_init_admin_pwd" {
  secret_id = data.aws_secretsmanager_secret.fsx_init_admin_pwd.id
}

locals {
  # Use local.fsx_init_admin_pwd to set the fsx_admin_password arg of the aws_fsx_ontap_file_system resource
  fsx_init_admin_pwd = jsondecode(data.aws_secretsmanager_secret_version.fsx_init_admin_pwd.secret_string)["password"]
}
```

As an additional reference, the AWS prescriptive guidance Securing sensitive data by using AWS Secrets Manager and HashiCorp Terraform provides some general best practices and considerations.

For Parameter Store, I take the same approach: I prefer to manage SecureString parameters outside of Terraform and use the aws_ssm_parameter data source to retrieve them. Here is an example:

```hcl
data "aws_ssm_parameter" "fsx_init_admin_pwd" {
  name = "/fsx/my-ontap-fs/initial-admin-password"
}

locals {
  # Use local.fsx_init_admin_pwd to set the fsx_admin_password arg of the aws_fsx_ontap_file_system resource
  # For a SecureString parameter, use the sensitive "value" attribute (decrypted by default)
  fsx_init_admin_pwd = data.aws_ssm_parameter.fsx_init_admin_pwd.value
}
```
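To close the loop, here is a sketch of how the retrieved password might be passed to the file system. It assumes local.fsx_init_admin_pwd is defined by either of the examples above and that a data.aws_subnet.private data source is defined elsewhere; the capacity and throughput values are illustrative for a single-AZ deployment, not recommendations.

```hcl
# Sketch: assumes local.fsx_init_admin_pwd and data.aws_subnet.private are defined elsewhere
resource "aws_fsx_ontap_file_system" "my_ontap_fs" {
  deployment_type     = "SINGLE_AZ_1"
  storage_capacity    = 1024 # GiB, illustrative value
  throughput_capacity = 128  # MBps, illustrative value
  subnet_ids          = [data.aws_subnet.private.id]
  preferred_subnet_id = data.aws_subnet.private.id

  # The password comes from Secrets Manager or Parameter Store, not from source code
  fsx_admin_password = local.fsx_init_admin_pwd
}
```

Keep in mind that values read through data sources are still recorded in the Terraform state, so the state backend itself should be encrypted and access-controlled.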

WKLD.04 – Protect Application Secrets

The workload control WKLD.04 recommends that you incorporate checks for exposed secrets into your commit and code review processes. This is outside the scope of Terraform, so we will move on to the next control.

WKLD.05 – Detect and Remediate Exposed Secrets

The workload control WKLD.05 recommends deploying a solution to detect application secrets in source code.

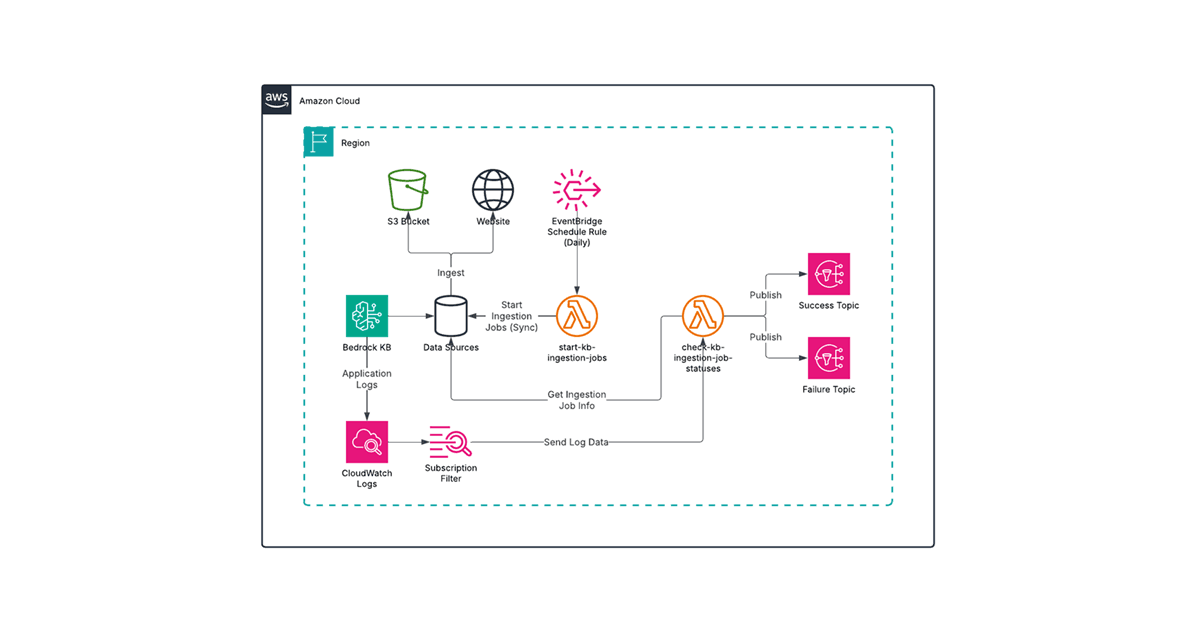

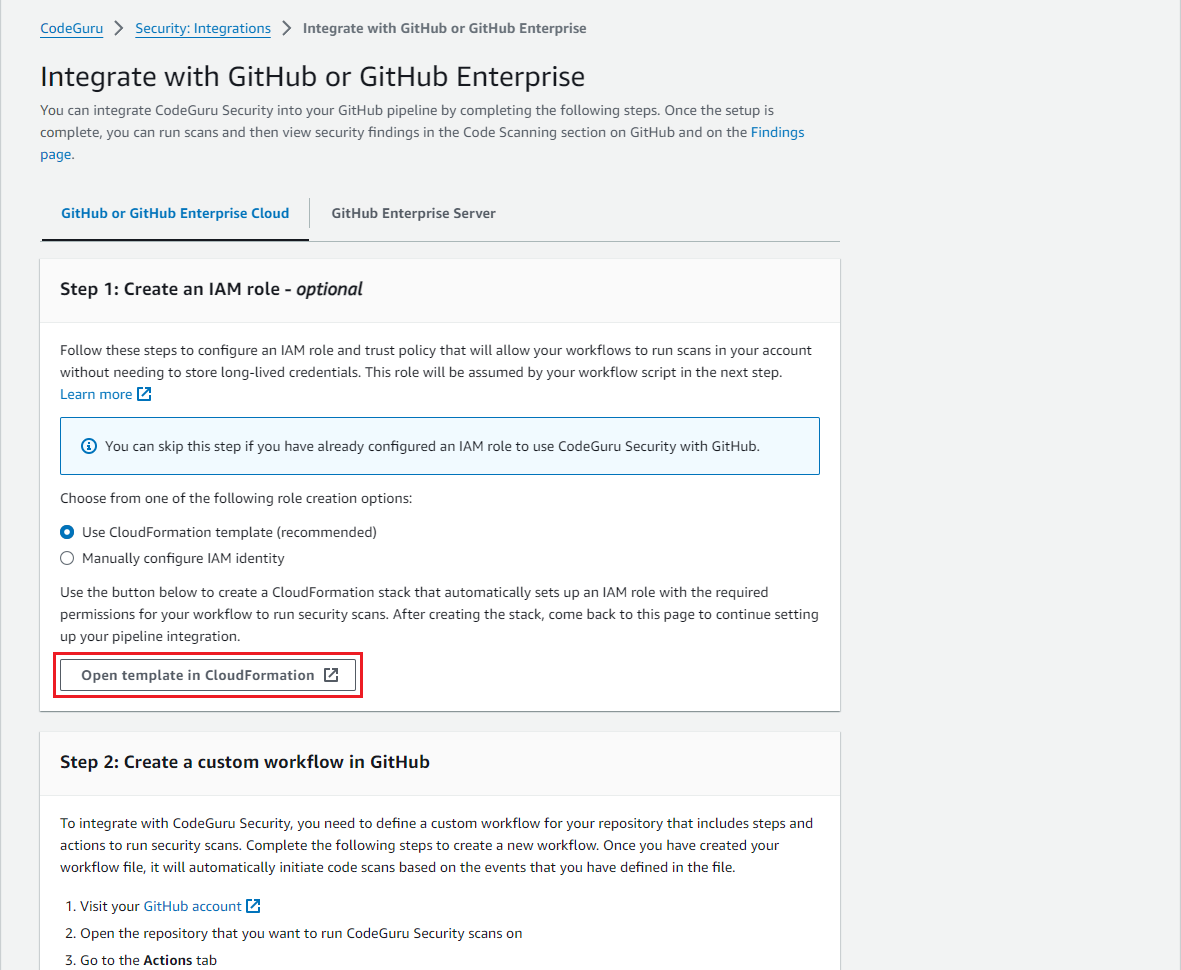

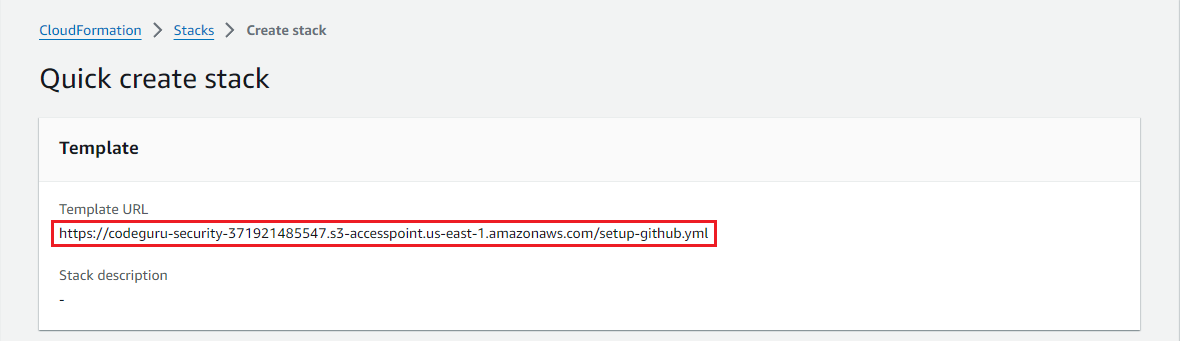

Amazon CodeGuru Security, a feature of Amazon CodeGuru, is a static application security tool that uses machine learning to detect security policy violations and vulnerabilities. In particular, it can detect unprotected secrets. The service is "enabled" by configuring a CI pipeline for supported platforms, including GitHub, BitBucket, GitLab, and AWS CodePipeline. The third-party solutions require an OIDC provider and an IAM role to be created, which CodeGuru Security provides CloudFormation templates for. So you can either convert them into Terraform for deployment, or deploy them directly in Terraform using the aws_cloudformation_stack resource. Here is an example for GitHub integration:

resource "aws_cloudformation_stack" "codeguru_security_github" {

name = "codeguru-security-github"

# The template URL is obtained

template_url = "https://codeguru-security-371921485547.s3-accesspoint.us-east-1.amazonaws.com/setup-github.yml"

parameters = {

Repository = "my-org/my-repo"

}

capabilities = ["CAPABILITY_NAMED_IAM"]

}

The template URL is obtained in the AWS Management Console by clicking on the Open template in CloudFormation button once you select an integration, as shown in the screenshots below:

WKLD.06 – Use Systems Manager Instead of SSH or RDP

The workload control WKLD.06 recommends the use of AWS Systems Manager Session Manager to securely access EC2 instances instead of placing them in a public subnet, or using a jump box or a bastion host.

With Session Manager, a user with the necessary IAM permissions can connect to an EC2 instance (to be precise, to the SSM Agent running on the instance) using the browser-based shell in the AWS Management Console or the AWS CLI with the Session Manager plugin. This method only requires outbound traffic from the instance to the SSM service endpoints (either through the internet or VPC endpoints), so you don't need to allow inbound SSH or RDP traffic from the internet or from a jump box or bastion host. Access is instead controlled through IAM and recorded in AWS CloudTrail, which results in better security and governance.
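Users also need IAM permissions to start sessions. The following sketch is modeled on the sample user policies in the Session Manager documentation: it lets users start sessions only on instances carrying a hypothetical Environment = dev tag and terminate or resume only their own sessions. The tag, the policy name, and the session ARN pattern are assumptions; check which policy variable matches the session IDs of your users' identity type before relying on it.

```hcl
# Hypothetical example: allow starting sessions only on instances tagged Environment = dev
data "aws_iam_policy_document" "session_manager_user" {
  statement {
    sid       = "StartSessionOnTaggedInstances"
    actions   = ["ssm:StartSession"]
    resources = ["arn:aws:ec2:*:*:instance/*"]

    condition {
      test     = "StringEquals"
      variable = "ssm:resourceTag/Environment"
      values   = ["dev"]
    }
  }

  statement {
    sid       = "UseDefaultShellDocument"
    actions   = ["ssm:StartSession"]
    resources = ["arn:aws:ssm:*:*:document/SSM-SessionManagerRunShell"]
  }

  statement {
    sid     = "ManageOwnSessions"
    actions = ["ssm:TerminateSession", "ssm:ResumeSession"]
    # "$$" escapes Terraform interpolation so the IAM policy variable is kept literally
    resources = ["arn:aws:ssm:*:*:session/$${aws:userid}-*"]
  }
}

resource "aws_iam_policy" "session_manager_user" {
  name   = "SessionManagerUserAccess"
  policy = data.aws_iam_policy_document.session_manager_user.json
}
```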

To enable Session Manager on an EC2 instance in Terraform, create an instance profile for a role with the AWS-managed policy AmazonSSMManagedInstanceCore attached, and then attach the instance profile to the EC2 instance. A basic example is already provided in the WKLD.01 section above. Note that this example assumes that the EC2 instance is deployed to a private subnet that routes outbound internet traffic to a NAT gateway in the same VPC.

If you prefer that EC2 instances communicate with SSM only within the AWS network, you can create the interface VPC endpoints that Session Manager requires (ssm, ec2messages, and ssmmessages) in the VPC and subnet where the EC2 instances reside. In Terraform, you can do this with the aws_vpc_endpoint resource as follows:

```hcl
# Example assumes that data.aws_vpc.this and data.aws_subnet.private are already defined

data "aws_region" "this" {}

locals {
  region = data.aws_region.this.name
}

resource "aws_security_group" "ssm_sg" {
  name        = "ssm-sg"
  description = "Allow TLS inbound To AWS Systems Manager Session Manager"
  vpc_id      = data.aws_vpc.this.id

  ingress {
    description = "HTTPS from VPC"
    from_port   = 443
    to_port     = 443
    protocol    = "tcp"
    cidr_blocks = [data.aws_vpc.this.cidr_block]
  }

  egress {
    description = "Allow All Egress"
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }
}

resource "aws_vpc_endpoint" "ssm" {
  vpc_id              = data.aws_vpc.this.id
  subnet_ids          = [data.aws_subnet.private.id]
  service_name        = "com.amazonaws.${local.region}.ssm"
  vpc_endpoint_type   = "Interface"
  security_group_ids  = [aws_security_group.ssm_sg.id]
  private_dns_enabled = true
}

resource "aws_vpc_endpoint" "ec2messages" {
  vpc_id              = data.aws_vpc.this.id
  subnet_ids          = [data.aws_subnet.private.id]
  service_name        = "com.amazonaws.${local.region}.ec2messages"
  vpc_endpoint_type   = "Interface"
  security_group_ids  = [aws_security_group.ssm_sg.id]
  private_dns_enabled = true
}

resource "aws_vpc_endpoint" "ssmmessages" {
  vpc_id              = data.aws_vpc.this.id
  subnet_ids          = [data.aws_subnet.private.id]
  service_name        = "com.amazonaws.${local.region}.ssmmessages"
  vpc_endpoint_type   = "Interface"
  security_group_ids  = [aws_security_group.ssm_sg.id]
  private_dns_enabled = true
}
```
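Since the three endpoints differ only in the service name, they can also be expressed as a single resource with for_each. This sketch is an alternative to the three endpoint resources in the previous configuration, not an addition to them: use one or the other, because two endpoints for the same service with private DNS enabled in one VPC would conflict.

```hcl
# Sketch: replaces the three aws_vpc_endpoint resources with one for_each resource
# Assumes data.aws_vpc.this, data.aws_subnet.private, local.region, and aws_security_group.ssm_sg exist
resource "aws_vpc_endpoint" "session_manager" {
  for_each = toset(["ssm", "ec2messages", "ssmmessages"])

  vpc_id              = data.aws_vpc.this.id
  subnet_ids          = [data.aws_subnet.private.id]
  service_name        = "com.amazonaws.${local.region}.${each.key}"
  vpc_endpoint_type   = "Interface"
  security_group_ids  = [aws_security_group.ssm_sg.id]
  private_dns_enabled = true
}
```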

WKLD.07 – Log Data Events for Select S3 Buckets

The workload control WKLD.07 recommends logging data events for S3 buckets that contain sensitive data in CloudTrail.

By default, data events are not captured in a CloudTrail trail and must be explicitly enabled. Since the volume of data events can be high depending on the access pattern of the resource, logging data events can get expensive quickly. It is therefore recommended that you log data events only for resources that contain sensitive data that warrants more scrutiny. Note that CloudTrail can also capture data events for other AWS services as listed in the AWS CloudTrail User Guide.

To demonstrate how to enable CloudTrail data event logging in Terraform, we will extend the basic example from ACCT.07 in part 2 of the blog series and capture data events from an S3 bucket using an advanced event selector. Here is the Terraform configuration:

```hcl
data "aws_caller_identity" "this" {}

data "aws_region" "this" {}

locals {
  account_id = data.aws_caller_identity.this.account_id
  region     = data.aws_region.this.name
}

resource "aws_s3_bucket" "top_secret" {
  bucket = "top-secret-${local.account_id}-${local.region}"
}

# Note: Bucket versioning and server-side encryption are not shown for brevity

resource "aws_s3_bucket" "cloudtrail" {
  bucket = "aws-cloudtrail-logs-${local.account_id}-${local.region}"
}

# Allows CloudTrail to check the bucket ACL and write log files
resource "aws_s3_bucket_policy" "cloudtrail" {
  bucket = aws_s3_bucket.cloudtrail.id
  policy = <<-EOT
    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "AWSCloudTrailAclCheck",
          "Effect": "Allow",
          "Principal": {
            "Service": "cloudtrail.amazonaws.com"
          },
          "Action": "s3:GetBucketAcl",
          "Resource": "${aws_s3_bucket.cloudtrail.arn}"
        },
        {
          "Sid": "AWSCloudTrailWrite",
          "Effect": "Allow",
          "Principal": {
            "Service": "cloudtrail.amazonaws.com"
          },
          "Action": "s3:PutObject",
          "Resource": "${aws_s3_bucket.cloudtrail.arn}/AWSLogs/${local.account_id}/*",
          "Condition": {
            "StringEquals": {
              "s3:x-amz-acl": "bucket-owner-full-control"
            }
          }
        }
      ]
    }
  EOT
}

resource "aws_cloudtrail" "this" {
  name                       = "aws-cloudtrail-logs-${local.account_id}-${local.region}"
  s3_bucket_name             = aws_s3_bucket.cloudtrail.id
  enable_log_file_validation = true
  is_multi_region_trail      = true

  # Keep logging all management events
  advanced_event_selector {
    field_selector {
      field  = "eventCategory"
      equals = ["Management"]
    }
  }

  # Log object-level data events only for the top secret bucket
  advanced_event_selector {
    name = "Log all S3 object events for the top secret bucket"

    field_selector {
      field  = "eventCategory"
      equals = ["Data"]
    }

    field_selector {
      field       = "resources.ARN"
      starts_with = ["${aws_s3_bucket.top_secret.arn}/"]
    }

    field_selector {
      field  = "resources.type"
      equals = ["AWS::S3::Object"]
    }
  }

  # CloudTrail checks the bucket policy when the trail is created, so the policy must exist first
  depends_on = [aws_s3_bucket_policy.cloudtrail]
}
```
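If logging every read is too costly and you mainly need to audit changes to the sensitive objects, the data event selector can be narrowed with the readOnly field. The following fragment is a sketch of an alternative to the second advanced_event_selector block inside the aws_cloudtrail resource above; it assumes the aws_s3_bucket.top_secret resource from that example.

```hcl
# Sketch: use inside aws_cloudtrail.this instead of the "all S3 object events" selector
advanced_event_selector {
  name = "Log write-only S3 object events for the top secret bucket"

  field_selector {
    field  = "eventCategory"
    equals = ["Data"]
  }

  field_selector {
    field  = "resources.type"
    equals = ["AWS::S3::Object"]
  }

  field_selector {
    field       = "resources.ARN"
    starts_with = ["${aws_s3_bucket.top_secret.arn}/"]
  }

  # "false" selects only events that modify resources, such as PutObject and DeleteObject
  field_selector {
    field  = "readOnly"
    equals = ["false"]
  }
}
```

For buckets where knowing who read the data matters most, keep the original selector instead, since this variant drops GetObject events.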

WKLD.08 – Encrypt Amazon EBS Volumes

The workload control WKLD.08 requires encrypting all Amazon EBS volumes.

AWS provides server-side encryption for many services that store data, so that you can apply encryption at rest with minimal effort. For Amazon EBS, there are two ways to encrypt volumes:

Enable EBS encryption by default at the regional level.

Enable EBS volume encryption when the EBS volume is created either individually or as part of provisioning an EC2 instance.

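In both cases, you can either use the AWS-managed key aws/ebs or provide your own KMS customer managed key (CMK). The latter gives you control over the key policy and rotation settings and, unlike the AWS-managed key, lets you share encrypted snapshots with other AWS accounts.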

To enable EBS encryption by default using Terraform, use the aws_ebs_encryption_by_default resource, and optionally the aws_ebs_default_kms_key resource if you wish to use a KMS CMK, as follows:

```hcl
resource "aws_kms_key" "ebs" {
  description         = "CMK for EBS encryption"
  enable_key_rotation = true
}

resource "aws_ebs_encryption_by_default" "this" {
  enabled = true
}

# Optional - the AWS-managed key will be used if this resource is not used
resource "aws_ebs_default_kms_key" "this" {
  key_arn = aws_kms_key.ebs.arn
}
```

To encrypt the EBS volumes of an EC2 instance, you can set the encrypted argument and optionally the kms_key_id argument in the *_block_device configuration blocks in the aws_instance resource. Likewise, these arguments apply to the aws_ebs_volume resource if you are provisioning EBS volumes individually. Here is a basic example that encrypts the root block device and an additional volume with the default EBS encryption key (the AWS-managed key aws/ebs, unless you have configured a different default):

```hcl
data "aws_ami" "ubuntu" {
  most_recent = true

  filter {
    name   = "name"
    values = ["ubuntu/images/hvm-ssd/ubuntu-jammy-22.04-amd64-server-*"]
  }

  filter {
    name   = "virtualization-type"
    values = ["hvm"]
  }

  owners = ["099720109477"] # Canonical
}

# Assumes data.aws_subnet.private is defined elsewhere
resource "aws_instance" "app_server" {
  ami           = data.aws_ami.ubuntu.id
  instance_type = "t3.large"
  subnet_id     = data.aws_subnet.private.id

  root_block_device {
    volume_type           = "gp3"
    volume_size           = 20
    delete_on_termination = true
    encrypted             = true
  }
}

resource "aws_ebs_volume" "app_server_data" {
  # The volume must be in the same Availability Zone as the instance it attaches to
  availability_zone = aws_instance.app_server.availability_zone
  type              = "gp3"
  size              = 50
  encrypted         = true
}

resource "aws_volume_attachment" "app_server_data" {
  device_name = "/dev/sdf"
  volume_id   = aws_ebs_volume.app_server_data.id
  instance_id = aws_instance.app_server.id
}
```
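To use a CMK instead, set kms_key_id alongside encrypted; the same pair of arguments also works in root_block_device. Here is a sketch for a standalone volume with a hypothetical key, where the Availability Zone is a placeholder that must match the instance the volume will be attached to.

```hcl
# Sketch: hypothetical customer managed key for application data volumes
resource "aws_kms_key" "app_server_ebs" {
  description         = "CMK for app server EBS volumes"
  enable_key_rotation = true
}

resource "aws_ebs_volume" "app_server_data_cmk" {
  availability_zone = "us-east-1a" # Placeholder; must match the instance's AZ
  type              = "gp3"
  size              = 50
  encrypted         = true
  kms_key_id        = aws_kms_key.app_server_ebs.arn
}
```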

WKLD.09 – Encrypt Amazon RDS Databases

The workload control WKLD.09 requires encrypting all Amazon RDS databases.

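Encrypting an RDS DB instance is very similar to encrypting EBS volumes. You can either use the default AWS-managed KMS key aws/rds or supply a KMS CMK. In Terraform, set the storage_encrypted argument and optionally the kms_key_id argument in the aws_db_instance resource. Note that encryption must be enabled when the DB instance is created; an existing unencrypted instance can only be encrypted by copying a snapshot with encryption enabled and restoring a new instance from it. Here is a basic example that uses the default AWS-managed key: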

```hcl
resource "aws_db_instance" "app_db" {
  allocated_storage           = 50
  db_name                     = "appdb"
  engine                      = "mysql"
  engine_version              = "8.0"
  instance_class              = "db.t3.large"
  manage_master_user_password = true
  parameter_group_name        = "default.mysql8.0"
  skip_final_snapshot         = true
  storage_encrypted           = true
  username                    = "mysqladm"
}
```
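To use a CMK, create the key and pass its ARN to kms_key_id. The following is a sketch based on the example above; the key, the instance identifier, and the resource names are hypothetical.

```hcl
# Sketch: hypothetical customer managed key for RDS storage encryption
resource "aws_kms_key" "rds" {
  description         = "CMK for RDS encryption"
  enable_key_rotation = true
}

resource "aws_db_instance" "app_db_cmk" {
  identifier                  = "app-db-cmk"
  allocated_storage           = 50
  db_name                     = "appdb"
  engine                      = "mysql"
  engine_version              = "8.0"
  instance_class              = "db.t3.large"
  manage_master_user_password = true
  parameter_group_name        = "default.mysql8.0"
  skip_final_snapshot         = true
  storage_encrypted           = true
  kms_key_id                  = aws_kms_key.rds.arn # kms_key_id expects a key ARN
  username                    = "mysqladm"
}
```

Because manage_master_user_password is set, RDS generates the master password and stores it in Secrets Manager, which also satisfies WKLD.03 for the database credentials.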

Summary

If you have followed this blog post this far, great job! This was a lot of information, but it is important to fully understand the best practices for controlling access to and safeguarding your workloads and their data. In the next and final installment of the blog series, we will wrap up with the remaining workload controls, which focus on network security. Stay tuned, and check out other posts in the Avangards Blog.