How To Manage an Amazon Bedrock Knowledge Base Using Terraform

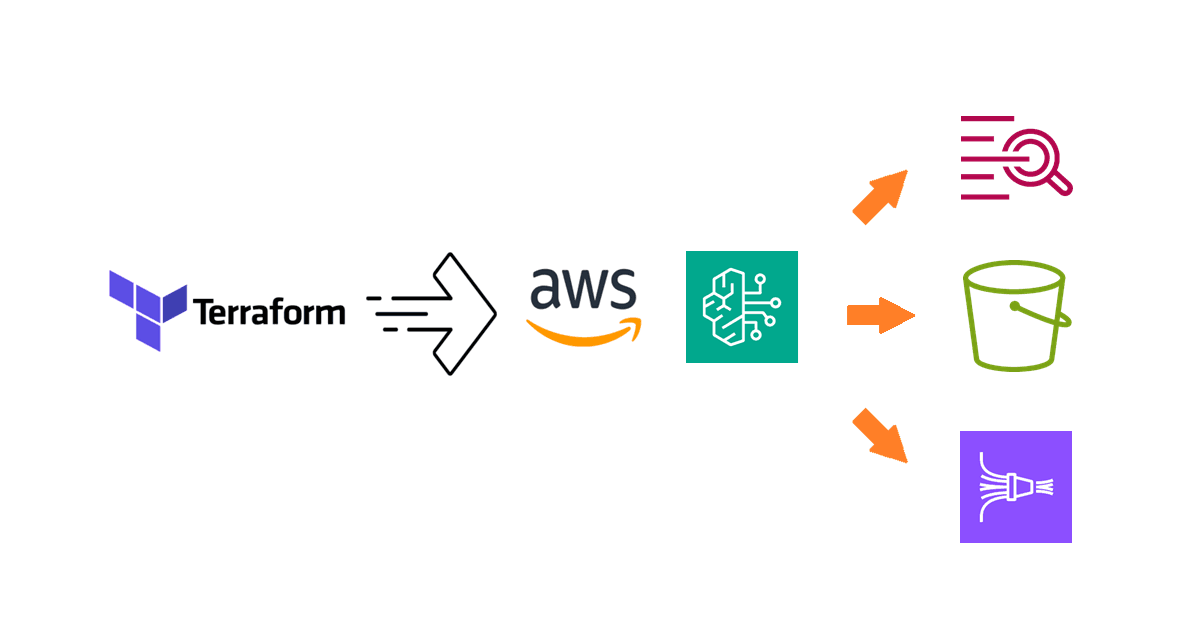

Learn how to use the new Knowledge Bases for Amazon Bedrock Terraform resources to provision and attach a knowledge base to an agent.

Introduction

In the previous blog post, Adding an Amazon Bedrock Knowledge Base to the Forex Rate Assistant, I explained how to create a Bedrock knowledge base and associate it with a Bedrock agent using the AWS Management Console, with a forex rate assistant as the use case example.

We also covered how to manage Bedrock agents with Terraform in another blog post, How To Manage an Amazon Bedrock Agent Using Terraform. In this blog post, we will extend that setup to also manage knowledge bases in Terraform. To begin, we will first examine the relevant AWS resources in the AWS Management Console.

Taking inventory of the required resources

Upon examining the knowledge base we previously built, we find that it comprises the following AWS resources:

The knowledge base itself;

The knowledge base service role that provides the knowledge base access to Amazon Bedrock models, data sources in S3, and the vector index;

The OpenSearch Serverless policies, collection, and the vector index;

The S3 bucket that acts as the data source.

With this list of resources, along with those required by the agent to which the knowledge base will be attached, we can begin creating the Terraform configuration. Before diving into the setup, let's first take care of the prerequisites.

Defining variables for the configuration

For better manageability, we define some variables in a variables.tf file that we will reference throughout the Terraform configuration:

variable "kb_s3_bucket_name_prefix" {

description = "The name prefix of the S3 bucket for the data source of the knowledge base."

type = string

default = "forex-kb"

}

variable "kb_oss_collection_name" {

description = "The name of the OSS collection for the knowledge base."

type = string

default = "bedrock-knowledge-base-forex-kb"

}

variable "kb_model_id" {

description = "The ID of the foundation model used by the knowledge base."

type = string

default = "amazon.titan-embed-text-v1"

}

variable "kb_name" {

description = "The knowledge base name."

type = string

default = "ForexKB"

}
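Because kb_oss_collection_name is reused later as the name of the collection and of its access and security policies, a bad value only surfaces when AWS rejects the request. As a minimal sketch, the variable could carry a validation rule. The naming rule encoded in the regular expression (3 to 32 characters, starting with a lowercase letter, then lowercase letters, digits, or hyphens) reflects my understanding of the OpenSearch Serverless naming constraints and is an assumption, not something taken from the original configuration:

```hcl
# Variant of the kb_oss_collection_name declaration above (replaces it, not an addition).
variable "kb_oss_collection_name" {
  description = "The name of the OSS collection for the knowledge base."
  type        = string
  default     = "bedrock-knowledge-base-forex-kb"

  validation {
    # Assumed rule: 3-32 characters, first character a lowercase letter,
    # remaining characters lowercase letters, digits, or hyphens.
    condition     = can(regex("^[a-z][a-z0-9-]{2,31}$", var.kb_oss_collection_name))
    error_message = "The collection name must be 3-32 characters, start with a lowercase letter, and contain only lowercase letters, digits, and hyphens."
  }
}
```

With this in place, an invalid name fails at plan time instead of partway through an apply.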

Defining the S3 and IAM resources

The knowledge base requires a service role, which can be created using the aws_iam_role resource as follows:

data "aws_caller_identity" "this" {}

data "aws_partition" "this" {}

data "aws_region" "this" {}

locals {

account_id = data.aws_caller_identity.this.account_id

partition = data.aws_partition.this.partition

region = data.aws_region.this.name

region_name_tokenized = split("-", local.region)

region_short = "${substr(local.region_name_tokenized[0], 0, 2)}${substr(local.region_name_tokenized[1], 0, 1)}${local.region_name_tokenized[2]}"

}

resource "aws_iam_role" "bedrock_kb_forex_kb" {

name = "AmazonBedrockExecutionRoleForKnowledgeBase_${var.kb_name}"

assume_role_policy = jsonencode({

Version = "2012-10-17"

Statement = [

{

Action = "sts:AssumeRole"

Effect = "Allow"

Principal = {

Service = "bedrock.amazonaws.com"

}

Condition = {

StringEquals = {

"aws:SourceAccount" = local.account_id

}

ArnLike = {

"aws:SourceArn" = "arn:${local.partition}:bedrock:${local.region}:${local.account_id}:knowledge-base/*"

}

}

}

]

})

}
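The region_short local in the block above packs the region name into a short token that is later used in the S3 bucket name. To make the string manipulation easier to follow, here is a small stand-alone sketch that applies the same expression to a few sample region names. The example_regions list and the output name are hypothetical and exist only for illustration:

```hcl
locals {
  # Hypothetical sample inputs, not part of the actual configuration.
  example_regions = ["us-east-1", "ap-southeast-2", "us-gov-west-1"]

  # Same expression as region_short: the first two characters of the first token,
  # the first character of the second token, then the third token as-is.
  example_region_short = {
    for r in local.example_regions :
    r => "${substr(split("-", r)[0], 0, 2)}${substr(split("-", r)[1], 0, 1)}${split("-", r)[2]}"
  }
}

output "example_region_short" {
  value = local.example_region_short
}
```

For us-east-1 this yields use1 and for ap-southeast-2 it yields aps2. Region names with four tokens, such as us-gov-west-1, come out as usgwest because the expression only reads the first three tokens, so the logic would need adjusting if you deploy to such regions.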

With the service role in place, we can define its IAM policies. Rather than writing one large policy up front, we will add a separate inline policy using the aws_iam_role_policy resource as we create each resource that the knowledge base service role needs to access. First, we create the IAM policy that provides access to the embeddings model. Since the foundation model is referenced rather than created, we can use the aws_bedrock_foundation_model data source to obtain the ARN that we need:

data "aws_bedrock_foundation_model" "kb" {

model_id = var.kb_model_id

}

resource "aws_iam_role_policy" "bedrock_kb_forex_kb_model" {

name = "AmazonBedrockFoundationModelPolicyForKnowledgeBase_${var.kb_name}"

role = aws_iam_role.bedrock_kb_forex_kb.name

policy = jsonencode({

Version = "2012-10-17"

Statement = [

{

Action = "bedrock:InvokeModel"

Effect = "Allow"

Resource = data.aws_bedrock_foundation_model.kb.model_arn

}

]

})

}

Next, we create the Amazon S3 bucket that acts as the data source for the knowledge base using the aws_s3_bucket resource. To adhere to security best practices, we also enable server-side encryption with Amazon S3 managed keys (SSE-S3) using the aws_s3_bucket_server_side_encryption_configuration resource and bucket versioning with the aws_s3_bucket_versioning resource as follows:

resource "aws_s3_bucket" "forex_kb" {

bucket = "${var.kb_s3_bucket_name_prefix}-${local.region_short}-${local.account_id}"

force_destroy = true

}

resource "aws_s3_bucket_server_side_encryption_configuration" "forex_kb" {

bucket = aws_s3_bucket.forex_kb.id

rule {

apply_server_side_encryption_by_default {

sse_algorithm = "AES256"

}

}

}

resource "aws_s3_bucket_versioning" "forex_kb" {

bucket = aws_s3_bucket.forex_kb.id

versioning_configuration {

status = "Enabled"

}

depends_on = [aws_s3_bucket_server_side_encryption_configuration.forex_kb]

}
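In the same spirit of security best practices, you may also want to state the bucket's public access settings explicitly. The following is a minimal sketch using the aws_s3_bucket_public_access_block resource. It is not part of the original configuration, and new buckets generally block public access by default already, so treat it as an optional way to make the intent visible in code:

```hcl
# Optional addition (not in the original configuration): explicitly block
# all forms of public access to the knowledge base data source bucket.
resource "aws_s3_bucket_public_access_block" "forex_kb" {
  bucket = aws_s3_bucket.forex_kb.id

  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}
```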

Now that the S3 bucket is available, we can create the IAM policy that gives the knowledge base service role access to files for indexing:

resource "aws_iam_role_policy" "bedrock_kb_forex_kb_s3" {

name = "AmazonBedrockS3PolicyForKnowledgeBase_${var.kb_name}"

role = aws_iam_role.bedrock_kb_forex_kb.name

policy = jsonencode({

Version = "2012-10-17"

Statement = [

{

Sid = "S3ListBucketStatement"

Action = "s3:ListBucket"

Effect = "Allow"

Resource = aws_s3_bucket.forex_kb.arn

Condition = {

StringEquals = {

"aws:PrincipalAccount" = local.account_id

}

}

},

{

Sid = "S3GetObjectStatement"

Action = "s3:GetObject"

Effect = "Allow"

Resource = "${aws_s3_bucket.forex_kb.arn}/*"

Condition = {

StringEquals = {

"aws:PrincipalAccount" = local.account_id

}

}

}

]

})

}

Defining the OpenSearch Serverless policy resources

The Bedrock console offers a quick create option that provisions an OpenSearch Serverless vector store on our behalf as the knowledge base is created. Since the documentation for creating the vector index in OpenSearch Serverless is a bit open-ended, we can refer to the resources that the quick create option provisions to fill in the gaps.

First, we configure permissions by defining a data access policy for the vector search collection. The data access policy from the quick create option is defined as follows:

This data access policy provides read and write permissions to the vector search collection and its indices to the knowledge base execution role and the creator of the policy.

Using the corresponding aws_opensearchserverless_access_policy resource, we can define the policy as follows:

resource "aws_opensearchserverless_access_policy" "forex_kb" {

name = var.kb_oss_collection_name

type = "data"

policy = jsonencode([

{

Rules = [

{

ResourceType = "index"

Resource = [

"index/${var.kb_oss_collection_name}/*"

]

Permission = [

"aoss:CreateIndex",

"aoss:DeleteIndex",

"aoss:DescribeIndex",

"aoss:ReadDocument",

"aoss:UpdateIndex",

"aoss:WriteDocument"

]

},

{

ResourceType = "collection"

Resource = [

"collection/${var.kb_oss_collection_name}"

]

Permission = [

"aoss:CreateCollectionItems",

"aoss:DescribeCollectionItems",

"aoss:UpdateCollectionItems"

]

}

],

Principal = [

aws_iam_role.bedrock_kb_forex_kb.arn,

data.aws_caller_identity.this.arn

]

}

])

}

Note that aoss:DeleteIndex was added to the list because this is required for cleanup by Terraform via terraform destroy.
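One caveat about the Principal list: when Terraform runs under an assumed role, data.aws_caller_identity.this.arn is the ARN of the temporary session rather than of the role itself. If you would rather grant access to the underlying role, one option is the aws_iam_session_context data source, sketched below. The local name oss_admin_principal_arn is hypothetical, and whether you need this at all depends on how your credentials are set up:

```hcl
# Resolves the caller's ARN to its issuing IAM role when the caller is an
# assumed-role session. For other principals, issuer_arn equals the input ARN.
data "aws_iam_session_context" "this" {
  arn = data.aws_caller_identity.this.arn
}

locals {
  # Hypothetical local; could be used in place of
  # data.aws_caller_identity.this.arn in the Principal list.
  oss_admin_principal_arn = data.aws_iam_session_context.this.issuer_arn
}
```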

Next, we need an encryption policy that assigns an encryption key to a collection for data protection at rest. The encryption policy from the quick create option is defined as follows:

This encryption policy simply assigns an AWS-owned key to the vector search collection. Using the aws_opensearchserverless_security_policy resource with an encryption type, we can define the policy as follows:

resource "aws_opensearchserverless_security_policy" "forex_kb_encryption" {

name = var.kb_oss_collection_name

type = "encryption"

policy = jsonencode({

Rules = [

{

Resource = [

"collection/${var.kb_oss_collection_name}"

]

ResourceType = "collection"

}

],

AWSOwnedKey = true

})

}

Lastly, we need a network policy which defines whether a collection is accessible publicly or privately. The network policy from the quick create option is defined as follows:

This network policy allows public access to the vector search collection's API endpoint and dashboard over the internet. Using the aws_opensearchserverless_security_policy resource with a network type, we can define the policy as follows:

resource "aws_opensearchserverless_security_policy" "forex_kb_network" {

name = var.kb_oss_collection_name

type = "network"

policy = jsonencode([

{

Rules = [

{

ResourceType = "collection"

Resource = [

"collection/${var.kb_oss_collection_name}"

]

},

{

ResourceType = "dashboard"

Resource = [

"collection/${var.kb_oss_collection_name}"

]

}

]

AllowFromPublic = true

}

])

}

With the prerequisite policies in place, we can now create the vector search collection and the index.

Defining the OpenSearch Serverless collection and index resources

Creating the collection in Terraform is straightforward using the aws_opensearchserverless_collection resource:

resource "aws_opensearchserverless_collection" "forex_kb" {

name = var.kb_oss_collection_name

type = "VECTORSEARCH"

depends_on = [

aws_opensearchserverless_access_policy.forex_kb,

aws_opensearchserverless_security_policy.forex_kb_encryption,

aws_opensearchserverless_security_policy.forex_kb_network

]

}

The knowledge base service role also needs access to the collection, which we can provide using the aws_iam_role_policy resource as before:

resource "aws_iam_role_policy" "bedrock_kb_forex_kb_oss" {

name = "AmazonBedrockOSSPolicyForKnowledgeBase_${var.kb_name}"

role = aws_iam_role.bedrock_kb_forex_kb.name

policy = jsonencode({

Version = "2012-10-17"

Statement = [

{

Action = "aoss:APIAccessAll"

Effect = "Allow"

Resource = aws_opensearchserverless_collection.forex_kb.arn

}

]

})

}

Creating the index in Terraform is more complex, however, since it is not an AWS resource but an OpenSearch construct. Looking at CloudTrail events, there weren't any events that correspond to an AWS API call that would create the index. However, observing the network traffic in the Bedrock console did reveal a request to the OpenSearch collection's API endpoint to create the index. This is what we want to port to Terraform.

Luckily, there is an OpenSearch Provider maintained by OpenSearch that we can use. To connect to the vector search collection, we provide the endpoint URL and credentials in the provider block. The provider has first-class support for AWS, so credentials can be provided implicitly similar to the Terraform AWS Provider. The resulting provider definition is as follows:

provider "opensearch" {

url = aws_opensearchserverless_collection.forex_kb.collection_endpoint

healthcheck = false

}

Note that the healthcheck argument is set to false because the client health check does not really work with OpenSearch Serverless.

To get the index definition, we can examine the collection in the OpenSearch Service Console:

We can create the index using the opensearch_index resource with the same specifications:

resource "opensearch_index" "forex_kb" {

name = "bedrock-knowledge-base-default-index"

number_of_shards = "2"

number_of_replicas = "0"

index_knn = true

index_knn_algo_param_ef_search = "512"

mappings = <<-EOF

{

"properties": {

"bedrock-knowledge-base-default-vector": {

"type": "knn_vector",

"dimension": 1536,

"method": {

"name": "hnsw",

"engine": "faiss",

"parameters": {

"m": 16,

"ef_construction": 512

},

"space_type": "l2"

}

},

"AMAZON_BEDROCK_METADATA": {

"type": "text",

"index": "false"

},

"AMAZON_BEDROCK_TEXT_CHUNK": {

"type": "text",

"index": "true"

}

}

}

EOF

force_destroy = true

depends_on = [aws_opensearchserverless_collection.forex_kb]

}

Note that the dimension is set to 1536, which is the value required for the Titan Embeddings G1 - Text model (amazon.titan-embed-text-v1) that we set as the default for kb_model_id.
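Since the dimension must match the embeddings model, changing kb_model_id without updating the index mapping would produce a mismatched index. A hedged way to keep the two in sync is a lookup map keyed by model ID, as sketched below. The local names are hypothetical, and the dimension values for the Cohere models reflect my understanding of their documentation, so verify them before relying on this:

```hcl
locals {
  # Output vector dimensions per embeddings model ID.
  # Values other than the Titan one are assumptions to verify.
  kb_embedding_dimensions = {
    "amazon.titan-embed-text-v1"   = 1536
    "cohere.embed-english-v3"      = 1024
    "cohere.embed-multilingual-v3" = 1024
  }

  # Fails at plan time if the selected model is not in the map.
  kb_vector_dimension = local.kb_embedding_dimensions[var.kb_model_id]
}
```

The value could then be interpolated into the mappings heredoc as ${local.kb_vector_dimension} in place of the hard-coded 1536.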

Before we move on, you should know about an issue with the Terraform OpenSearch provider that caused me a lot of headaches. When I was testing the Terraform configuration, the opensearch_index resource kept failing because the provider seemingly could not authenticate against the collection's endpoint URL. After a long debugging session, I found a GitHub issue in the Terraform OpenSearch Provider repository that mentions the same cryptic "EOF" error I was seeing. According to the issue, the bug is related to OpenSearch Serverless, and an earlier provider version, v2.2.0, does not have the problem. Consequently, I was able to work around the problem by pinning the provider to this specific version:

terraform {

required_providers {

aws = {

source = "hashicorp/aws"

version = "~> 5.48"

}

opensearch = {

source = "opensearch-project/opensearch"

version = "= 2.2.0"

}

}

required_version = "~> 1.5"

}

Hopefully letting you in on this tip will save you hours of troubleshooting.

Defining the knowledge base resource

With all dependent resources in place, we can now proceed to create the knowledge base. However, there is the matter of eventual consistency with IAM resources that we first need to address. Since Terraform creates resources in quick succession, there is a chance that the configuration of the knowledge base service role is not propagated across AWS endpoints before it is used by the knowledge base during its creation, resulting in temporary permission issues. What I observed during testing is that the permission error is usually related to the OpenSearch Serverless collection.

To mitigate this, we add a delay using the time_sleep resource in the Time Provider. The following configuration will add a 20-second delay after the IAM policy for the OpenSearch Serverless collection is created:

resource "time_sleep" "aws_iam_role_policy_bedrock_kb_forex_kb_oss" {

create_duration = "20s"

depends_on = [aws_iam_role_policy.bedrock_kb_forex_kb_oss]

}
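Terraform can resolve the hashicorp/time provider implicitly, but since the other providers are already declared in required_providers, you may prefer to declare this one as well. The sketch below repeats the terraform block from earlier with one extra entry. The version constraint for the time provider is an assumption on my part, so adjust it to whatever version you have tested:

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.48"
    }
    opensearch = {
      source  = "opensearch-project/opensearch"
      version = "= 2.2.0"
    }
    # Added entry for the time_sleep resource; the constraint is an assumption.
    time = {
      source  = "hashicorp/time"
      version = "~> 0.11"
    }
  }
  required_version = "~> 1.5"
}
```

A module may contain only one required_providers block, so this replaces the earlier terraform block instead of sitting alongside it.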

Now we can create the knowledge base using the aws_bedrockagent_knowledge_base resource as follows:

resource "aws_bedrockagent_knowledge_base" "forex_kb" {

name = var.kb_name

role_arn = aws_iam_role.bedrock_kb_forex_kb.arn

knowledge_base_configuration {

vector_knowledge_base_configuration {

embedding_model_arn = data.aws_bedrock_foundation_model.kb.model_arn

}

type = "VECTOR"

}

storage_configuration {

type = "OPENSEARCH_SERVERLESS"

opensearch_serverless_configuration {

collection_arn = aws_opensearchserverless_collection.forex_kb.arn

vector_index_name = "bedrock-knowledge-base-default-index"

field_mapping {

vector_field = "bedrock-knowledge-base-default-vector"

text_field = "AMAZON_BEDROCK_TEXT_CHUNK"

metadata_field = "AMAZON_BEDROCK_METADATA"

}

}

}

depends_on = [

aws_iam_role_policy.bedrock_kb_forex_kb_model,

aws_iam_role_policy.bedrock_kb_forex_kb_s3,

opensearch_index.forex_kb,

time_sleep.aws_iam_role_policy_bedrock_kb_forex_kb_oss

]

}

Note that time_sleep.aws_iam_role_policy_bedrock_kb_forex_kb_oss is in the depends_on list. This is how the aforementioned delay is enforced before Terraform creates the knowledge base.

We also need to add the data source to the knowledge base using the aws_bedrockagent_data_source resource as follows:

resource "aws_bedrockagent_data_source" "forex_kb" {

knowledge_base_id = aws_bedrockagent_knowledge_base.forex_kb.id

name = "${var.kb_name}DataSource"

data_source_configuration {

type = "S3"

s3_configuration {

bucket_arn = aws_s3_bucket.forex_kb.arn

}

}

}

Voila! We have created a stand-alone Bedrock knowledge base using Terraform! All that remains is to attach the knowledge base to an agent (the forex assistant in our case) to extend the solution.

Integrating the knowledge base and agent resources

For your convenience, you can use the Terraform configuration from the blog post How To Manage an Amazon Bedrock Agent Using Terraform to create the rate assistant. It can be found in the 1_basic directory in this GitHub repository.

Once this Terraform configuration is combined with the knowledge base configuration we have been developing, we can use the new aws_bedrockagent_agent_knowledge_base_association resource to associate the knowledge base with the agent:

resource "aws_bedrockagent_agent_knowledge_base_association" "forex_kb" {

agent_id = aws_bedrockagent_agent.forex_asst.id

description = file("${path.module}/prompt_templates/kb_instruction.txt")

knowledge_base_id = aws_bedrockagent_knowledge_base.forex_kb.id

knowledge_base_state = "ENABLED"

}

For better organization, we will keep the knowledge base description in a text file called kb_instruction.txt in the prompt_templates folder. The file contains the following text:

Use this knowledge base to retrieve information on foreign currency exchange, such as the FX Global Code.

Lastly, we explained in the previous blog post that the agent must be prepared after changes are made. We used a null_resource to trigger the prepare action, so we will continue to use the same strategy for the knowledge base association by adding an explicit dependency:

resource "null_resource" "forex_asst_prepare" {

triggers = {

forex_api_state = sha256(jsonencode(aws_bedrockagent_agent_action_group.forex_api))

forex_kb_state = sha256(jsonencode(aws_bedrockagent_knowledge_base.forex_kb))

}

provisioner "local-exec" {

command = "aws bedrock-agent prepare-agent --agent-id ${aws_bedrockagent_agent.forex_asst.id}"

}

depends_on = [

aws_bedrockagent_agent.forex_asst,

aws_bedrockagent_agent_action_group.forex_api,

aws_bedrockagent_knowledge_base.forex_kb

]

}
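One thing to watch for: the block above depends on the knowledge base but not on the association resource, so Terraform is free to run the prepare action before the association exists. If you see the agent prepared without the knowledge base attached, a variant like the following sketch may help. It is my own adjustment, not the configuration from the repository, and the forex_kb_assoc_state trigger key is a hypothetical name:

```hcl
# Variant of forex_asst_prepare (replaces the block above) that also tracks
# and waits for the knowledge base association.
resource "null_resource" "forex_asst_prepare" {
  triggers = {
    forex_api_state      = sha256(jsonencode(aws_bedrockagent_agent_action_group.forex_api))
    forex_kb_state       = sha256(jsonencode(aws_bedrockagent_knowledge_base.forex_kb))
    forex_kb_assoc_state = sha256(jsonencode(aws_bedrockagent_agent_knowledge_base_association.forex_kb))
  }

  provisioner "local-exec" {
    command = "aws bedrock-agent prepare-agent --agent-id ${aws_bedrockagent_agent.forex_asst.id}"
  }

  depends_on = [
    aws_bedrockagent_agent.forex_asst,
    aws_bedrockagent_agent_action_group.forex_api,
    aws_bedrockagent_knowledge_base.forex_kb,
    aws_bedrockagent_agent_knowledge_base_association.forex_kb
  ]
}
```

Referencing the association in triggers already creates an implicit dependency. Listing it in depends_on as well simply keeps the block consistent with the original style.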

Testing the configuration

Now, the moment of truth. We can apply the full Terraform configuration and make sure that it is working properly. My run took several minutes, with the majority of the time spent on creating the OpenSearch Serverless collection. Here is an excerpt of the output for reference:

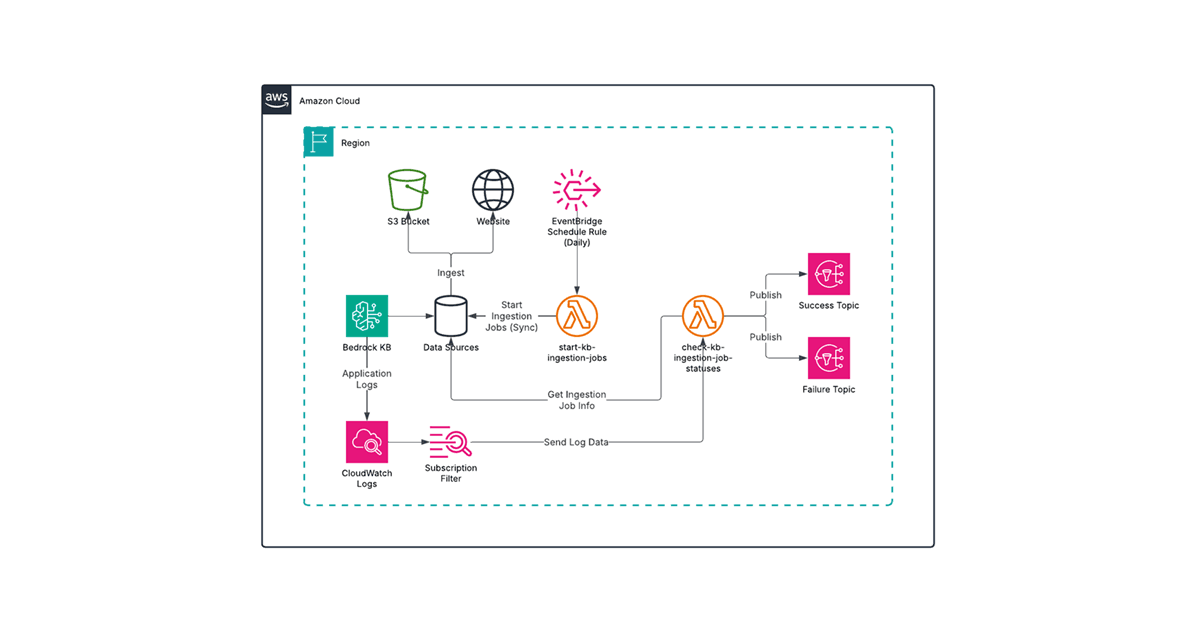

In the Bedrock console, we can see that the agent ForexAssistant is ready for testing. But we first need to upload the FX Global Code PDF file to the S3 bucket and do a data source sync. For details on these steps, refer to the blog post Adding an Amazon Bedrock Knowledge Base to the Forex Rate Assistant.
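If you would rather not perform the upload and sync by hand, both steps can be approximated in Terraform. The following is a rough sketch under several assumptions: the local file path and object key are hypothetical, the AWS CLI is available where Terraform runs (as it already must be for the prepare-agent step), and the ingestion job is only started, not waited on:

```hcl
# Hypothetical path and key; point these at your copy of the FX Global Code PDF.
resource "aws_s3_object" "fx_global_code" {
  bucket      = aws_s3_bucket.forex_kb.id
  key         = "fx_global_code.pdf"
  source      = "${path.module}/docs/fx_global_code.pdf"
  source_hash = filemd5("${path.module}/docs/fx_global_code.pdf")
}

# Starts a data source sync whenever the uploaded document changes.
# The job runs asynchronously; this does not wait for it to finish.
resource "null_resource" "forex_kb_sync" {
  triggers = {
    document_hash = aws_s3_object.fx_global_code.source_hash
  }

  provisioner "local-exec" {
    command = "aws bedrock-agent start-ingestion-job --knowledge-base-id ${aws_bedrockagent_knowledge_base.forex_kb.id} --data-source-id ${aws_bedrockagent_data_source.forex_kb.data_source_id}"
  }
}
```

Because the sync is asynchronous, the knowledge base may not return results until the job completes, so check its status in the console before testing.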

Using the test chat interface, I asked:

What is the FX Global Code?

It responded with an explanation that contains citations, indicating that the information was obtained from the knowledge base.

For good measure, we will also ask the forex assistant for an exchange rate:

What is the exchange rate from US Dollar to Canadian Dollar?

It responded with the latest exchange rate as expected:

And that's a wrap! Don't forget to run terraform destroy when you are done, since there is a running cost for the OpenSearch Serverless collection.

The complete Terraform configuration is available in the 2_knowledge_base directory in this repository. Feel free to check it out and use it as the basis for your Bedrock experimentation.

Summary

In this blog post, we developed the Terraform configuration for the knowledge base that enhances the forex rate assistant which we created interactively in the blog post Adding an Amazon Bedrock Knowledge Base to the Forex Rate Assistant. I hope the explanations on key points and solutions to various issues in this blog post help you fast-track your IaC development for Amazon Bedrock solutions.

I will continue to evaluate different features of Amazon Bedrock, such as Guardrails for Amazon Bedrock, and to look at streamlining the data ingestion process for knowledge bases. Please look forward to more helpful content on this topic, as well as many others, in the Avangards Blog. Happy learning!